Guest post by Jeff Mosenkis of Innovations for Poverty Action.

- Data and computer algorithms are playing a part in policy decisions, like bail or parole recommendations based on a computer’s recidivism guesses. ProPublica and WNYC have an interesting series on “Machine Bias” – what happens when data-based algorithms are mistaken. They’re crowdsourcing a test of how accurate Facebook’s picture of you really is. Use their Chrome extension to help anonymously. (h/t Alex Goldmark)

- Some practical tips from my financial inclusion colleagues for setting up conferences where academics and real-world types actually communicate (it’s short and you can skip to the bullet points).

- Brazil is starting “race committees” to determine who has enough African heritage to qualify for affirmative action. One government job applicant who tried to prove he was Black enough:

went to seven dermatologists who used something called the Fitzpatrick scale that grades skin tone from one to seven, or whitest to darkest. The last doctor even had a special machine.

“Apparently on my face I’m a Type 4. Which would be like Jennifer Lopez or Dev Patel, Frida Pinto [sic] or John Stamos. On my limbs I would be Type 5, which is Halle Berry, Will Smith, Beyonce and Tiger Woods,” he said.

- World Bank President Jim Yong Kim plans on naming and shaming countries with high rates of child growth stunting (e.g. from malnutrition) that aren’t using proven methods for addressing the massive problem:

The problem is huge. In India 38.7% of children are stunted, in Pakistan 45% and in the Democratic Republic of the Congo 70%.

It becomes even worse when you consider the future of work is in skilled jobs, and kids in poor countries are starting out with lifetime cognitive deficits.

- Some good news: a Lancet study finds 78% of diarrheal disease is caused by six bugs, which may make treatment easier.

- New Book: Impact Evaluation in Practice, Second Edition is available FREE here.

- There’s been a spate of schools being burned down in Kenya. An anthropologist suggests the reason is that students are protesting prison-like conditions and have learned from the political realm that the government only listens to protests when they’re a threat to public safety. (h/t Lee Crawfurd)

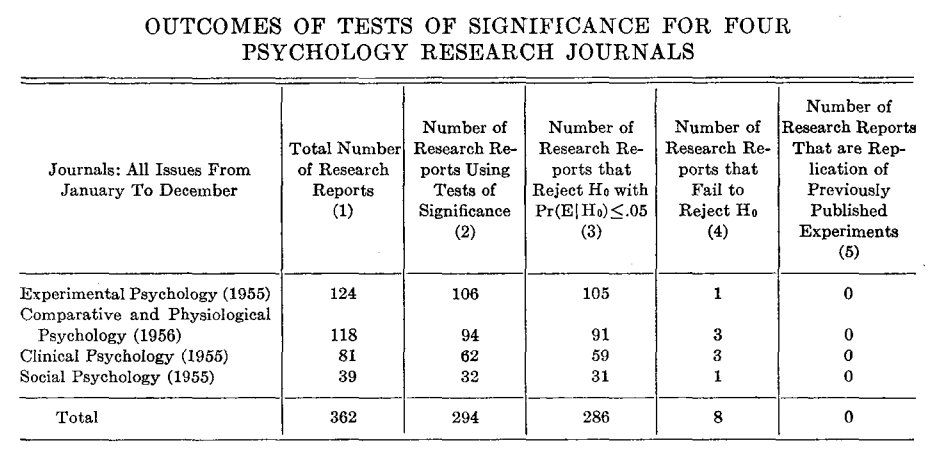

Neuroscientist and data guy John Borghi points to one table from 1959 that explains psychology’s current replication crisis*:

* (Many more fields have replication crises; psychology’s the one that seems to be dealing with it.)
